Abstract:

Breiman (2001, Statistical Science) urged statisticians to provide tools for data X obtained from a sampler f(θ, Y), where f is known, the parameter θ ∈ Θ, and Y is random, either observed or latent. The discussants of the paper, D. R. Cox and B. Efron, treated the problem as prediction of X, surprisingly neglecting statistical inference for θ, and disagreed with the main thrust of the paper. Consequently, mathematical statisticians ignored Breiman’s suggestion! Computer scientists, however, do work on Breiman’s problem, calling f(θ, Y) a learning machine. In this talk, following Breiman’s suggestion, statistical inference tools are presented for data X = f(θ, Y): a) the Empirical Discrimination Index (EDI), to detect θ-discrimination and identifiability; b) Matching estimates of θ, with upper bounds on the errors that depend on the “massiveness” of Θ; c) an approximate posterior that includes all θ* drawn from a Θ-sampler, unlike the ABC-rejection method of Rubin (1984, Annals of Statistics) followed until now. The results in a) are unique in the literature (YY, JCGS, 2023). Only mild assumptions are needed in b) and c). Unlike existing results, which often need strong and unverifiable assumptions, the upper rate for the errors in b) is independent of the data dimension. When Θ is a subset of R^m with m unknown, the upper rate can be [m_n (log n)/n]^{1/2} in probability, with m_n increasing to infinity as slowly as we wish; when m is known, m_n = m. Approximate posteriors in c) are obtained for any data dimension. When X = f(θ, Y) and a c.d.f. F_θ for X is assumed, one may be better off using the sampler and a)-c), since F_θ may be wrong!
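For readers unfamiliar with the ABC-rejection baseline mentioned in the abstract, here is a minimal Python sketch of that classic scheme. It is not the speaker's EDI, Matching estimate, or approximate posterior. The sampler f(θ, Y) = θ + Y with standard normal Y, the uniform Θ-sampler on [-5, 5], the sample-mean summary, and the tolerance `eps` are all illustrative assumptions and do not come from the talk.

```python
import random
import statistics


def sampler(theta, n, rng):
    """Hypothetical sampler X = f(theta, Y): theta plus standard normal noise Y."""
    return [theta + rng.gauss(0.0, 1.0) for _ in range(n)]


def abc_rejection(x_obs, n_draws, eps, rng):
    """Classic ABC rejection: keep theta* only if its simulated data is close to x_obs."""
    obs_mean = statistics.fmean(x_obs)
    accepted = []
    for _ in range(n_draws):
        theta_star = rng.uniform(-5.0, 5.0)  # assumed Theta-sampler (uniform prior)
        x_star = sampler(theta_star, len(x_obs), rng)
        # Compare data sets through an assumed summary statistic, the sample mean.
        if abs(statistics.fmean(x_star) - obs_mean) <= eps:
            accepted.append(theta_star)  # all other theta* are discarded
    return accepted


rng = random.Random(0)
x_obs = sampler(2.0, 50, rng)  # "observed" data generated with theta = 2
posterior_draws = abc_rejection(x_obs, n_draws=5000, eps=0.2, rng=rng)
print(len(posterior_draws), statistics.fmean(posterior_draws))
```

Most of the drawn θ* are thrown away in this scheme, which is the feature the abstract contrasts with an approximate posterior that includes all θ* drawn.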

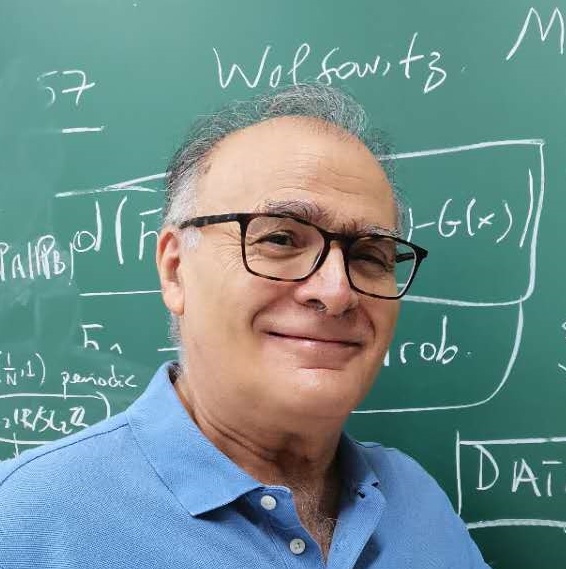

About the Speaker:

Yannis Yatracos obtained his Ph.D. from UC Berkeley under Lucien Le Cam. He is Professor of Statistics at the Yau Mathematical Sciences Center, Tsinghua University. Previous regular appointments include Rutgers, Columbia, UCSB, the University of Montreal, the University of Marseille (at Luminy), Cyprus U. of Technology and the National University of Singapore (NUS). Yannis started his research in density estimation, with the calculation of rates of convergence via Minimum Distance methods. He extended these results to non-parametric regression and continued his research in other areas of Statistics and in Actuarial Science. In particular, Yannis investigated pathologies of plug-in methods, such as the bootstrap and the MLE, and the limitations of Wasserstein MDE. His current research interests include: a) Cluster and Structure Detection for High Dimensional Data, and b) Statistical Foundations of Data Science, in particular for Learning Machines. Yannis is an IMS and ASA Fellow, an Elected ISI member, and was an Associate member of the Society of Actuaries (U.S.A.).

Your participation is warmly welcomed!

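Scan the QR code to follow the official account of the Center for Statistical Science, Peking University, for more information on upcoming talks!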